LoLTheory is a technically ambitious draft tool: rather than hand-write a formula or stop at a champion-vs-champion lookup, it trains a per-elo logistic regression on ~14,500 pairwise features from scratch. But the model is still linear over pairwise inputs, and the moment a property of the draft emerges only from the whole ten-pick combination, the model has no way to represent it. This post shows that concretely on a full-AP draft, then measures the gap on 32,930 real ranked matches played in a strict holdout window.

What is LoLTheory?

LoLTheory is primarily an Overwolf overlay app (~166,000 downloads) with a web-based counterpart at loltheory.gg. Its draft scorer is interesting because, unlike other pairwise-stats tools, the backend actually trains a model. The author, Griffin Wood, described it on Reddit in 2022: a logistic regression with one-hot dummies for every champion-role-combination pair (~14,490 features per sample), trained with stochastic gradient descent on Plat+ ranked games, with separate models per elo bucket. He quoted out-of-sample accuracy "somewhere between 54 to 56%", a number our independent measurement below matches closely.

Because LoLTheory is a real model (not a hand-written formula), its draft scorer can express richer pairwise effects than a pure statistical tool like DraftGap. But it is still linear, and in a linear model over pairs there is no weight that can represent "my entire team is AP". We'll demonstrate that first with a concrete example, then measure the effect rigorously.
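To make "linear over pairs" concrete, here is a minimal sketch of a logistic regression whose only inputs are one-hot pair features. The feature encoding, the `pairwise_features` and `predict` helpers, and the example weight are all assumptions for illustration; LoLTheory's actual encoding and weights are not public in this form.

```python
import math
from itertools import combinations

def pairwise_features(ally, enemy):
    """Return the active one-hot pair features for a draft.

    ally and enemy are lists of (champion, role) tuples. Each feature is a
    pair of picks: a same-team synergy or a cross-team matchup.
    (Hypothetical encoding, not LoLTheory's actual feature layout.)
    """
    feats = set()
    for a, b in combinations(sorted(ally), 2):
        feats.add(("synergy", a, b))
    for a in ally:
        for e in enemy:
            feats.add(("matchup", a, e))
    return feats

def predict(weights, bias, ally, enemy):
    """Logistic regression: sigmoid of the summed weights of active pairs."""
    active = pairwise_features(ally, enemy)
    logit = bias + sum(weights.get(f, 0.0) for f in active)
    return 1.0 / (1.0 + math.exp(-logit))

full_ap = [("Cho'Gath", "top"), ("Zyra", "jungle"), ("Zoe", "middle"),
           ("Karthus", "bottom"), ("Maokai", "support")]
# Made-up weight for a single synergy pair; every other pair defaults to 0.
weights = {("synergy", ("Karthus", "bottom"), ("Zoe", "middle")): 0.05}
p = predict(weights, 0.0, full_ap, [])
```

Every term in the logit belongs to exactly two picks, so the prediction can only move by adding up pair contributions. There is no slot in `weights` keyed on all five allies at once.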

Qualitative: LoLTheory can't see team-wide damage profile

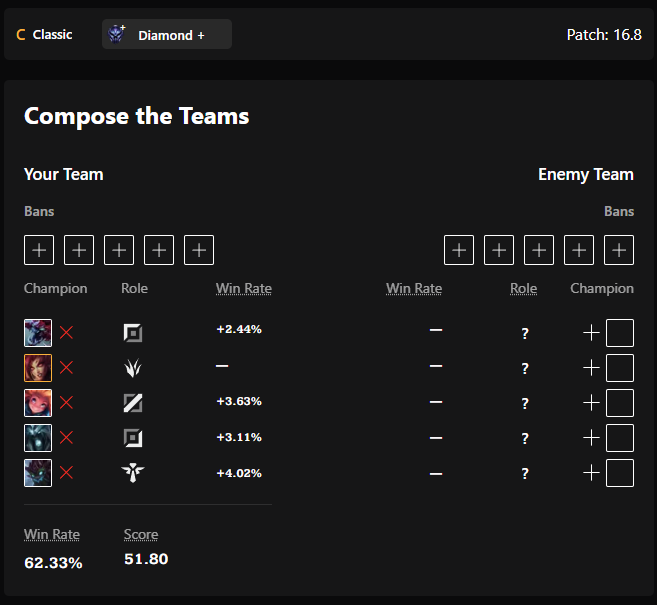

The cleanest single illustration is a team where every champion deals magic damage. Take this ally composition, picking the highest-AP champion in each role:

- Top: Cho'Gath

- Jungle: Zyra

- Middle: Zoe

- Bottom: Karthus

- Support: Maokai

With no enemy team picked yet, LoLTheory rates this ally composition at a 62.33% win rate, because each of these champions has a strong solo winrate and they pair well on paper. LoLDraftAI, which reasons about the damage profile of the whole team, puts the same draft at 39.2%. A single magic-resist item on every enemy trivializes the team's damage, and LoLDraftAI picks that up from the composition alone; LoLTheory has no weight that can represent it.

LoLTheory prediction:

LoLDraftAI prediction:

This isn't unique to full-AP. It's the general signature of linear pairwise models. Any property that only emerges from the whole draft will be systematically mishandled:

- Teams with no CC

- Teams with one carry or too many carries

- Teams made entirely of late-game scaling champions

- Teams that share a single damage type

- Blue vs red side differences

Each of these is small individually, but they add up. The next section measures how much they add up to, on ~33,000 real ranked games.
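A small sketch of why a pair weight cannot carry a whole-team property: the same pair appears, with the same weight, in a full-AP draft and in a mixed-damage draft. The `DAMAGE` table is a simplified illustrative tagging, and the `mixed` team is a hypothetical comparison draft, not one from the evaluation.

```python
from itertools import combinations

# Simplified primary damage type per champion (illustrative tagging only).
DAMAGE = {
    "Cho'Gath": "magic", "Zyra": "magic", "Zoe": "magic",
    "Karthus": "magic", "Maokai": "magic",
    "Darius": "physical", "Lee Sin": "physical",
}

def pair_set(team):
    """All unordered champion pairs on a team."""
    return set(combinations(sorted(team), 2))

def all_magic(team):
    """Team-level property: true only if every pick deals magic damage."""
    return all(DAMAGE[champ] == "magic" for champ in team)

full_ap = ["Cho'Gath", "Zyra", "Zoe", "Karthus", "Maokai"]
mixed = ["Darius", "Lee Sin", "Zoe", "Karthus", "Maokai"]  # hypothetical

# Pairs present in both drafts contribute identical weights to both scores.
shared = pair_set(full_ap) & pair_set(mixed)
```

Here `shared` holds the three pairs among Zoe, Karthus, and Maokai. A pairwise model scores them identically in both drafts, even though `all_magic` is true for one team and false for the other.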

Statistical accuracy comparison

Holdout methodology

Scoring a model against matches it was trained on is meaningless, so we use a strict temporal holdout:

- LoLDraftAI training cutoff at 2026-04-17, 23:25 UTC — the latest game timestamp in the model's training data.

- LoLTheory queried live on patch 16.8.1 during April 2026. LoLTheory re-fits its model against a rolling current-patch window that updates every few hours (see the advantage discussion below).

- Eval set = matches played after both cutoffs. Queue 420 (ranked solo/duo), Emerald+, EUW1 & KR regions, no duration filter. 32,930 matches total.
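The filter above can be sketched as follows. The match dictionary keys (`queue_id`, `region`, `start`) and the `other_cutoff` parameter are hypothetical names for illustration, and the elo filter is left out for brevity.

```python
from datetime import datetime, timezone

# Latest game timestamp in LoLDraftAI's training data (from the list above).
TRAIN_CUTOFF = datetime(2026, 4, 17, 23, 25, tzinfo=timezone.utc)
RANKED_SOLO_QUEUE = 420
REGIONS = {"EUW1", "KR"}

def in_eval_set(match, other_cutoff=TRAIN_CUTOFF):
    """Keep a match only if it started after both cutoffs.

    match is a dict with hypothetical keys: queue_id, region, start.
    other_cutoff stands in for the second cutoff mentioned in the post.
    """
    cutoff = max(TRAIN_CUTOFF, other_cutoff)
    return (
        match["queue_id"] == RANKED_SOLO_QUEUE
        and match["region"] in REGIONS
        and match["start"] > cutoff
    )

example = {
    "queue_id": 420,
    "region": "KR",
    "start": datetime(2026, 4, 18, 9, 0, tzinfo=timezone.utc),
}
```

Comparing against the later of the two cutoffs is what makes the holdout strict: a match that predates either cutoff is dropped.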

A note on the LoLTheory holdout. Unlike LoLDraftAI, which is strictly frozen before the eval set, LoLTheory may have ingested some of the eval matches into its training pool by the time we queried it. On average this hands LoLTheory an advantage, though early in a patch the rolling-window refit can also be hit by small-sample noise. Either way, the LoLDraftAI win below is if anything understated: we are comparing a frozen LoLDraftAI model against a LoLTheory model that has seen at least part of the test set.

For each match we call LoLTheory's /team-comp/team-performance/0 endpoint with the appropriate rank-range (EMERALD for Emerald I matches, DIAMOND_PLUS for Diamond+/Master+). LoLTheory's model is side-agnostic (their UI only distinguishes "my team" from "enemy team", and the API confirms it: querying side=0 and side=1 on the same draft returns identical predictions). To keep the comparison apples-to-apples we run LoLDraftAI in its side-agnostic mode as well (averaging predictions over both team orderings), while keeping each match's actual elo bucket. No elo handicap is needed, since LoLTheory also trains a model per elo.
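One plausible reading of "averaging predictions over both team orderings" is sketched below. The `side_agnostic` helper and the `toy_model` (a fixed blue-side bonus plus a strength gap) are assumptions for illustration, not LoLDraftAI's actual code.

```python
def side_agnostic(predict_blue_win, team_a, team_b):
    """Average team_a's win probability over both side assignments.

    predict_blue_win(blue, red) returns the blue team's win probability.
    """
    p_a_as_blue = predict_blue_win(team_a, team_b)
    p_a_as_red = 1.0 - predict_blue_win(team_b, team_a)
    return 0.5 * (p_a_as_blue + p_a_as_red)

def toy_model(blue, red):
    # Hypothetical stand-in: teams are plain strength numbers, and the
    # blue side gets a fixed +3 percentage point bonus.
    return 0.5 + 0.03 + 0.1 * (blue - red)

p_a = side_agnostic(toy_model, 0.6, 0.4)
p_b = side_agnostic(toy_model, 0.4, 0.6)
```

In this toy setup the side bonus cancels out: `p_a` depends only on the strength gap, and `p_a + p_b` equals 1.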

Headline results

Quick definitions:

- Log loss (lower = better): how surprised the model is by the true outcome. ln(2) ≈ 0.693 is the coinflip score; anything below that beats 50/50.

- Brier score (lower = better): mean squared error between predicted probability and outcome.

- Accuracy: fraction of games won by the team the model gave the higher predicted win rate.

- ECE (expected calibration error, lower = better): average gap between stated probability and observed frequency, so a low value means stated confidence matches reality.

Parenthetical ranges are 95% bootstrap confidence intervals, the plausible range if we resampled matches with replacement. On every one of these, LoLDraftAI beats LoLTheory.
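For readers who want the definitions in executable form, here is a minimal sketch of the four metrics. The equal-width, 10-bin ECE is an assumption; the post does not state which binning was used.

```python
import math

def log_loss(probs, outcomes):
    """Mean negative log-likelihood of the true outcomes (1 = win, 0 = loss)."""
    eps = 1e-12  # guard against log(0)
    total = 0.0
    for p, y in zip(probs, outcomes):
        total += y * math.log(max(p, eps)) + (1 - y) * math.log(max(1 - p, eps))
    return -total / len(probs)

def brier(probs, outcomes):
    """Mean squared error between predicted probability and outcome."""
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)

def accuracy(probs, outcomes):
    """Fraction of games where the side predicted above 50% actually won."""
    hits = sum((p > 0.5) == (y == 1) for p, y in zip(probs, outcomes))
    return hits / len(probs)

def ece(probs, outcomes, n_bins=10):
    """Expected calibration error over equal-width bins (assumed binning)."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        bins[min(int(p * n_bins), n_bins - 1)].append((p, y))
    err = 0.0
    for b in bins:
        if b:
            mean_p = sum(p for p, _ in b) / len(b)
            rate = sum(y for _, y in b) / len(b)
            err += len(b) / len(probs) * abs(mean_p - rate)
    return err

# A pure coinflip predictor scores ln(2) on log loss and 0.25 on Brier.
coinflip = log_loss([0.5] * 4, [1, 0, 1, 0])
```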

| Model | Log loss (95% CI) | Accuracy (95% CI) | Brier (95% CI) | ECE |
|---|---|---|---|---|
| LoLTheory | 0.6907 (0.6885–0.6928) | 54.41% (53.87–54.93) | 0.2486 (0.2476–0.2496) | 0.0326 |
| LoLDraftAI | 0.6824 (0.6807–0.6842) | 55.90% (55.37–56.43) | 0.2447 (0.2439–0.2456) | 0.0100 |

Evaluated on 32,930 matches. A few things to note:

- LoLTheory's headline accuracy (54.41%) lines up almost exactly with Griffin's own Reddit-stated 54–56% range, a useful reproducibility check that the holdout is clean.

- On log loss, Brier score, and accuracy, the 95% bootstrap CIs of the two models do not overlap. The difference is real and not explained by sampling noise.

- ECE (expected calibration error) is roughly 3.3× lower for LoLDraftAI (0.0100 vs 0.0326). This is the cleanest single difference: when LoLTheory says "65%" the empirical rate is further from that than when LoLDraftAI does.
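The confidence intervals in the table can be approximated with a percentile bootstrap over per-match values. This is a sketch under assumptions: the post does not say which bootstrap variant or how many resamples were used, and `bootstrap_ci` and `intervals_overlap` are illustrative helpers.

```python
import random

def bootstrap_ci(values, n_resamples=1000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for the mean of per-match values.

    values could be per-match log losses, squared errors, or 0/1 hits.
    """
    rng = random.Random(seed)
    n = len(values)
    means = sorted(
        sum(rng.choices(values, k=n)) / n for _ in range(n_resamples)
    )
    lo = means[int((alpha / 2) * n_resamples)]
    hi = means[int((1 - alpha / 2) * n_resamples) - 1]
    return lo, hi

def intervals_overlap(a, b):
    """True if closed intervals a = (lo, hi) and b = (lo, hi) intersect."""
    return a[0] <= b[1] and b[0] <= a[1]

# Log-loss intervals copied from the headline table.
lt_ci = (0.6885, 0.6928)
lda_ci = (0.6807, 0.6842)
separated = not intervals_overlap(lt_ci, lda_ci)
```

Non-overlapping intervals are a conservative check. Since both models are scored on the same matches, a bootstrap of the per-match difference would typically give a tighter comparison.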

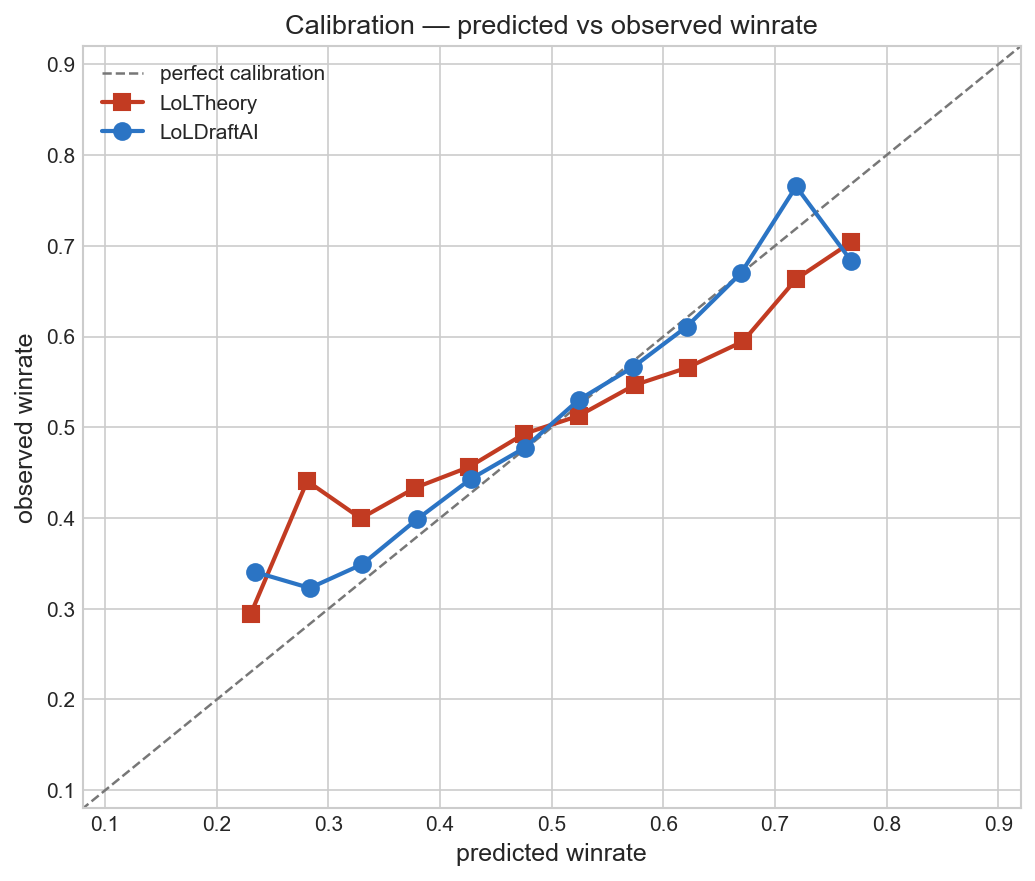

Calibration

A reliability diagram plots, for each bucket of predicted win probability (x-axis), the actual observed win rate for matches in that bucket (y-axis). A perfectly calibrated model sits exactly on the dashed diagonal: when it says "70%", the team wins 70% of the time. The further a curve drifts from the diagonal, the less its stated probabilities match reality.

LoLTheory's predictions are too extreme at both ends: drafts it rates as likely losses win more often than it predicts, and drafts it rates as likely wins win less often than it predicts. Its confident predictions are further from reality than LoLDraftAI's. This is consistent with an uncalibrated summation of pairwise edges, the structural cost of a linear pairwise model.
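The points of a reliability diagram can be computed with a few lines. This sketch assumes equal-width bins, and the toy predictions are made up to show the overconfident shape described above; they are not taken from the evaluation.

```python
def reliability_curve(probs, outcomes, n_bins=10):
    """Return (mean predicted, observed win rate, count) per non-empty bin."""
    sums = [[0.0, 0, 0] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        i = min(int(p * n_bins), n_bins - 1)
        sums[i][0] += p   # running sum of predicted probabilities
        sums[i][1] += y   # running count of wins
        sums[i][2] += 1   # matches in this bin
    return [(s[0] / s[2], s[1] / s[2], s[2]) for s in sums if s[2]]

# Toy overconfident model: says 30% where teams win 40%,
# and 70% where teams win 60%.
probs = [0.3] * 10 + [0.7] * 10
outcomes = [1] * 4 + [0] * 6 + [1] * 6 + [0] * 4
curve = reliability_curve(probs, outcomes)
```

Plotting the first element of each tuple against the second gives the curve. In the toy data the low bin sits above the diagonal and the high bin sits below it.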

Slices by elo and region

To make sure the aggregate win isn't hiding a single-bucket fluke, here's the same comparison broken down by elo bracket and region. Lower log loss is better.

| Elo bucket | n | LoLTheory log loss | LoLDraftAI log loss | LoLTheory acc | LoLDraftAI acc |
|---|---|---|---|---|---|
| Master+ | 4,853 | 0.6801 | 0.6802 | 56.25% | 56.27% |
| Diamond I–II | 7,096 | 0.6954 | 0.6813 | 54.00% | 56.52% |
| Diamond III–IV | 9,265 | 0.6952 | 0.6824 | 53.85% | 56.28% |
| Emerald I | 11,716 | 0.6886 | 0.6841 | 54.34% | 55.06% |

| Region | n | LoLTheory log loss | LoLDraftAI log loss | LoLTheory acc | LoLDraftAI acc |
|---|---|---|---|---|---|
| EUW1 | 18,090 | 0.6886 | 0.6821 | 54.78% | 56.06% |
| KR | 14,840 | 0.6931 | 0.6828 | 53.96% | 55.70% |

LoLDraftAI wins every accuracy slice and every log-loss slice except Master+, where LoLTheory edges ahead by 0.0001, well inside any reasonable noise floor on the smallest bucket. The margin widens in the middle Diamond buckets, which is consistent with there being more non-linear draft interaction to capture in that population.
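As a consistency check, the match-weighted average of the per-elo log losses should reproduce the headline numbers. The sketch below uses only figures from the elo table above; `weighted_mean` is an illustrative helper.

```python
# (bucket, n, LoLTheory log loss, LoLDraftAI log loss) from the elo table.
ELO_SLICES = [
    ("Master+", 4853, 0.6801, 0.6802),
    ("Diamond I-II", 7096, 0.6954, 0.6813),
    ("Diamond III-IV", 9265, 0.6952, 0.6824),
    ("Emerald I", 11716, 0.6886, 0.6841),
]

def weighted_mean(slices, column):
    """Match-weighted average of one metric column across slices."""
    total_n = sum(row[1] for row in slices)
    return sum(row[1] * row[column] for row in slices) / total_n

total_matches = sum(row[1] for row in ELO_SLICES)
lt_overall = weighted_mean(ELO_SLICES, 2)
lda_overall = weighted_mean(ELO_SLICES, 3)
```

The slice sizes sum to 32,930, and the weighted means round to 0.6907 and 0.6824, matching the headline table.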

What LoLTheory does well

We want this comparison to be honest: LoLTheory has real product strengths that our test doesn't touch. A few worth calling out:

- Enemy role assignment. Given five enemy picks with no roles, LoLTheory infers which champion plays which role with per-champion probabilities. (LoLDraftAI solves the same assignment problem, picking the most probable overall combination, but DraftGap and iTero do not.)

- Build recommendations. Their biggest product differentiator is a bias-corrected item recommender that adapts to the game state (via their Overwolf overlay). This comparison only covers draft prediction, so their build pipeline is out of scope, but it's genuinely sophisticated, and we don't compete on full in-game builds.

- Free. LoLTheory's draft scorer is free to use. If accuracy isn't the top priority, that's a real advantage.

Related comparisons

- DraftGap vs LoLDraftAI — a deterministic formula over the same kind of pairwise statistics, scored on the same 33k-match strict holdout.

- iTero vs LoLDraftAI — a trained tree-ensemble over pairwise aggregates, tested via a 100-draft head-to-head tournament.

Try LoLDraftAI

LoLDraftAI is free to use in the browser, or as a desktop app that auto-imports your draft from the League client.

- Web tool — no install

- Desktop app — live draft tracking

Appendix A: Reproducibility

LoLTheory's predictions were produced by hitting their public REST API (api.prod.loltheory.gg), the same endpoint their own web UI and overlay consume. Each eval match was queried with the correct rank-range for its elo bucket (EMERALD for Emerald I, DIAMOND_PLUS for Diamond+/Master+), with queue-id=420. All predictions were captured while LoLTheory was serving patch 16.8.1.
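The elo-to-rank-range mapping described above can be sketched as follows. This is an assumption-heavy illustration: the `https` scheme, the exact query parameter spellings (`rank-range`, `queue-id`), and the helper names are guesses based on the wording in this post, and the champion and role parameters are deliberately left out because their names are not given here.

```python
from urllib.parse import urlencode

# Host and path as named in this post; the https scheme is an assumption.
BASE_URL = "https://api.prod.loltheory.gg/team-comp/team-performance/0"
RANKED_SOLO_QUEUE = 420

def rank_range_for(elo_bucket):
    """Map an eval elo bucket to the rank-range value used in the queries."""
    if elo_bucket.startswith("Emerald"):
        return "EMERALD"
    return "DIAMOND_PLUS"  # Diamond and Master+ buckets

def build_url(elo_bucket):
    """Build a partial query URL (draft parameters intentionally omitted)."""
    params = {
        "rank-range": rank_range_for(elo_bucket),  # assumed parameter name
        "queue-id": RANKED_SOLO_QUEUE,             # assumed parameter name
    }
    return BASE_URL + "?" + urlencode(params)

url = build_url("Diamond I-II")
```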

The per-match predictions CSV below lets readers re-verify any individual prediction by querying LoLTheory's public UI or API with the same champions.

- predictions.csv — per-match predictions and outcomes.